You Did Not Get a PhD to Check DOI Links

Verifying reference lists is mechanical work.

Let a machine do it. Get your afternoon back.

The Real Cost of AI-Generated Manuscripts

AI-assisted writing has made one problem significantly worse: fabricated references. AI tools can hallucinate citations: plausible-sounding papers with real journal names, credible author names, and properly formatted DOIs that lead nowhere.

Many submitting authors do not check; they trust the AI. The burden has therefore shifted to you (the reviewer, the thesis committee member, the editor) to catch what should never have made it past the author's own desk.

Manually verifying 30–50 references can take 1–2 hours. That is time you are not spending on evaluating the science, on your own research, or on anything else.

The problem is not limited to manuscripts in which AI was used to generate references. Any use of AI for editing, rephrasing, or polishing text (improving a conclusion, tightening an abstract, rewording a methods section) can silently alter citations. This is not carelessness or bad faith on the author's part. It is intrinsic to how generative models work: they produce plausible text rather than retrieve verified facts. The author may be entirely unaware that a citation changed. It is still your problem to catch.

What AiCitationChecker Does in 2 Minutes

Checks every reference

Every citation in the list is cross-checked against three major academic databases: CrossRef, OpenAlex, and Semantic Scholar.

Flags specific problems

Invalid DOIs, author mismatches, title–author combinations that don't exist, year discrepancies, wrong journal metadata. Not just "not found" — specific, actionable flags.

Suggests corrections and reformats

When the correct paper can be identified, AiCitationChecker provides the verified citation reformatted from CrossRef metadata (the authoritative record, not the author's version). Export the complete verified list as a Word document in your choice of citation style: APA, IEEE, Chicago, Harvard, Vancouver, or MDPI.

The Patterns It Catches

- Non-existent papers: no match in CrossRef, OpenAlex, or Semantic Scholar, despite plausible-sounding metadata

- DOI exists, wrong paper — the DOI resolves, but to a completely different publication: different title, different authors, different year

- Correct title, fabricated authors — the paper exists; the listed authors did not write it

- Real paper, wrong journal or year — metadata inconsistencies that would pass a superficial check

- Duplicated references: the same paper listed twice in different formats

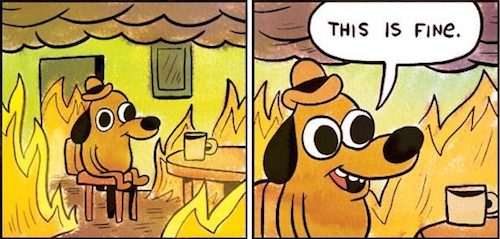

Buying groceries ...

Calling the plumber ...

Deadline in 47 min ...

Just 113 citations to check.

How Academics Use It

Peer review

Check the reference list before you write your evaluation. Fabricated citations become documented findings, not opinions. 2 minutes, not 2 hours.

Thesis examination

Check the bibliography before the defence. Hallucinated references are objective, verifiable facts — not suspicions.

Editorial screening

First-pass check at intake. Desk-reject manuscripts with fabricated references before they consume reviewer time.

Own manuscript

Verify your AI-assisted literature review before submission. A clean reference list reflects the quality of the work.

Journal editors

Spot fabricated references before a manuscript enters your workflow. Flag problematic submissions at intake — before they damage your journal's credibility.

Student support

Run a quick check on submitted bibliographies. A hallucinated reference gives you a concrete teaching moment — not a vague conversation about "AI reliability."

Why Fabricated References Are Not a Minor Issue

A fabricated reference is not an opinion or an estimate. It is a verifiable fact: the paper either exists in academic databases or it does not. Unlike AI-text detection — which is probabilistic, contested, and legally challengeable — a missing citation is binary, objective, and documentable.

Published papers with fabricated references have been retracted. Authors have faced misconduct proceedings. Journals and institutions that failed to catch them have absorbed reputational damage. The tools now exist to catch this at the review stage. Using them is due diligence.

Reference verification is exactly the kind of mechanical, high-stakes, repetitive task that machines do better than humans — faster, more consistently, without fatigue. Your expertise is for what comes after: evaluating the science.

Free to Start. Priced for What It Saves.

Checking the references for a single peer review can take 1–2 hours. At any academic hourly rate, the tool pays for itself on the first use. The question is not whether you can afford it, but whether you can afford to keep doing it manually.

Free — $0

A credit allowance that refreshes every day, enough for a typical reference list. No credit card, no subscription. Start here.

Silver — $9.99

90 days of Regular access + 2,500 credits. One-time purchase, no auto-renewal. Covers dozens of full reference lists — thesis committees, editorial screening, regular peer review.

Gold — $19.99

180 days of Regular access + 6,000 credits. One-time purchase, no auto-renewal. For sustained high-volume use — journal editors, research groups, institutional workflows.

30 seconds to set up. 2 minutes to check a reference list.

Free account. No credit card.

Start Free Verification